Google Autocomplete Pushed Civil War Narrative, Covid Disinfo, and Global Warming Denial

Despite few people actually searching for these terms

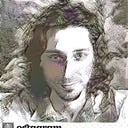

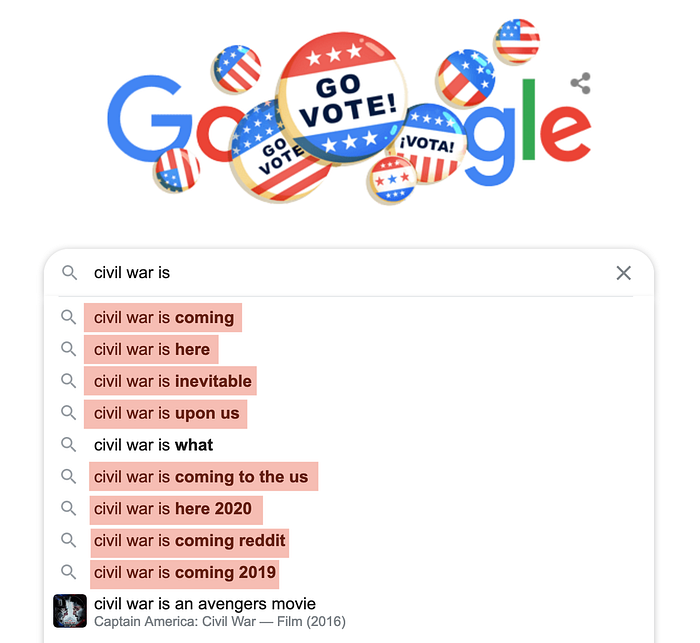

Here’s what Google autocomplete looked like for months at the end of 2020, and on January 6th before the invasion of the Capitol (all searches were done in incognito mode from NYC):

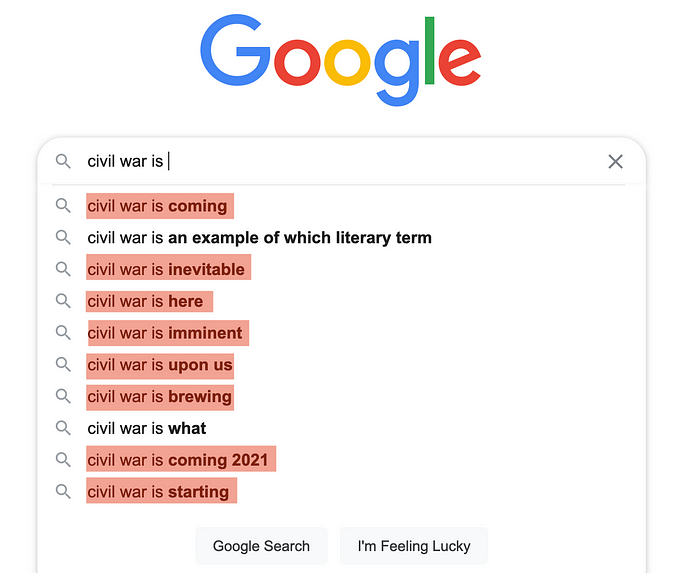

The first thought that came to my mind was: maybe users are searching for these terms, and the algorithm is just a “mirror of society”? But Google’s own data tells another story. According to Google Trends, in the month before the Capitol invasion, “civil war is what” was searched:

- 17 times more than “civil war is coming”

- 35 times more than “civil war is here”

- 52 times more than “civil war is starting”

- 113 times more than “civil war is inevitable”

- 175 times more than “civil war is upon us”

“civil war is brewing”, “civil war is coming 2021”, and “civil war is imminent” have search volumes so low that Google Trends doesn’t let us compare them. So why did Google promote them?

This demonstrates that Google autocomplete results can be completely uncorrelated with search volumes.

On algotransparency.org, we have been monitoring dozens of autocomplete results daily since the US election. These results have been consistent for the last two months. You can access them here.

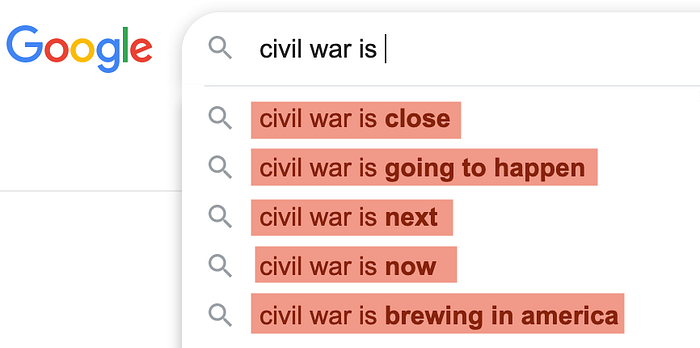

So when did Google change its autocomplete suggestions? Only after I tweeted about it, following the Capitol invasion:

The results are now not as bad: “civil war is close”, “civil war is next”. But when I clicked on “civil war is close” and then searched again (this time not in private mode), the results changed and became:

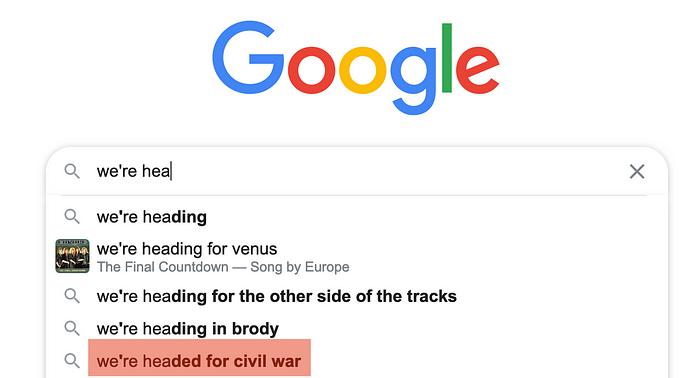

Google is also serving you Civil War when you’re looking for completely different things. For instance, if you start typing “we’re hea” in Google, here is what you get:

In French and Spanish, Google is also pushing similar suggestions (civil war is close, civil war is at our doors, etc.). For instance, if you start with “soon the” (in French, “bientot la”), Google autocompletes to “soon the civil war in France” (“bientot la guerre civile en France”), even though the popular French expression “bientot la retraite” (“soon the retirement”) gets far more searches.

Why is Google promoting search terms that are less searched?

We don’t even know which AI is used for Google autocomplete, nor what it tries to optimize for. It might be that Google tries to reduce the number of keystrokes typed. Fear-mongering results or, at the other extreme, “feel good” results (see the Covid results further down) could lead people to click faster, which would lead to those results being favored.

Favored by how much? If you assume that the most searched query would be shown first, then the autocomplete favors “civil war is coming” by at least a factor of 17 and “civil war is upon us” by at least a factor of 175.
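These factors follow directly from the Google Trends ratios listed earlier. Here is a minimal sketch of that arithmetic in Python. The ratios are typed in by hand from this post, the dictionary and function names are purely illustrative, and nothing here queries Google. It also relies on the same assumption as the text: that a pure volume ranking would show the most searched query first.

```python
# How many times more often the reference query "civil war is what" was
# searched than each completion, per Google Trends in the month before
# January 6th. Values are copied by hand from this post.
TIMES_LESS_SEARCHED = {
    "civil war is coming": 17,
    "civil war is here": 35,
    "civil war is starting": 52,
    "civil war is inevitable": 113,
    "civil war is upon us": 175,
}


def relative_volumes(ratios, reference="civil war is what"):
    """Express each query's volume as a share of the reference query's volume."""
    volumes = {reference: 1.0}
    for query, ratio in ratios.items():
        volumes[query] = 1.0 / ratio
    return volumes


def min_favoring_factor(query, volumes):
    """Lower bound on how much a displayed query was favored, assuming a pure
    volume ranking would have shown the most searched query first."""
    return max(volumes.values()) / volumes[query]


volumes = relative_volumes(TIMES_LESS_SEARCHED)
for query in TIMES_LESS_SEARCHED:
    factor = min_favoring_factor(query, volumes)
    print(f"{query!r}: favored by at least {factor:.0f}x")
```

The result is only a lower bound: any query searched even more than “civil war is what” would push the factors higher.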

I would love to have the insights of Timnit Gebru on these results, since she worked at Google on the ethics of the text generative AIs that are probably used in Google autocomplete.

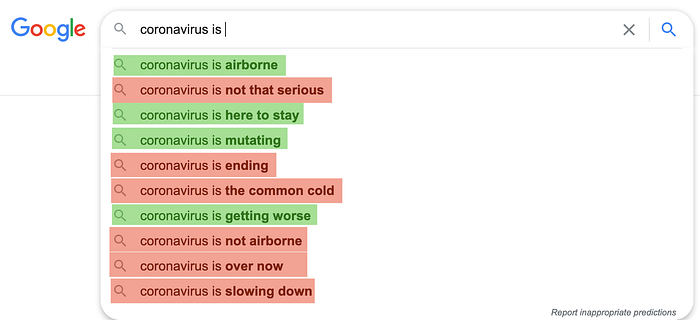

Covid-19 Disinfo

There was an inflection in the Covid-19 infection curve at the end of October. Many people said that it was just due to more testing. At that time, the US election was also looming. At that crucial moment, what was Google promoting with its autocomplete?

Below is what Google autocomplete suggested on October 20th. I marked in red the suggestions that have been proven wrong, and in green the ones that have been proven right.

Once again, these suggestions were completely uncorrelated with search volumes. For instance, according to Google Trends, there were ten times more searches for “coronavirus is serious” than for “coronavirus is not that serious” (in the month before October 20th). However, the AI chose to display the latter instead of the former!

Medical disinformation has been promoted by Google Autocomplete for years, and has been decried, for instance, in this post. But it’s mind-blowing that it is happening right in the middle of a pandemic, while all eyes are anxiously watching.

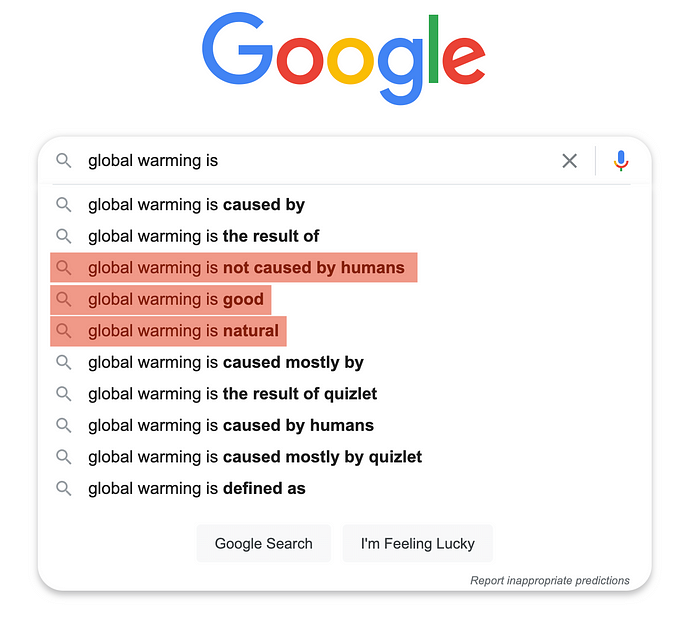

Global Warming Disinfo

Again, in the last month, there were around 5 times more search queries for “Global warming is bad” than for “Global warming is not caused by humans”, and 3 times more than for “Global warming is good”. However, Google suggests “Global warming is good”, while “Global warming is bad” doesn’t even appear in the suggestions.
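This kind of mismatch can be checked mechanically once you have relative search volumes and a list of displayed suggestions. The sketch below is a hypothetical illustration, not the code running on AlgoTransparency.org: `find_inversions` is a name made up for this example, the volumes are typed in by hand from the ratios above, and the list of suggestions contains only the completion this post names as suggested.

```python
def find_inversions(suggested, volumes):
    """Return (shown, hidden, factor) triples where a query that was NOT
    suggested is searched more often than one that was."""
    inversions = []
    for shown in suggested:
        for hidden, hidden_volume in volumes.items():
            if hidden in suggested:
                continue  # only compare against queries that were not shown
            if hidden_volume > volumes[shown]:
                factor = hidden_volume / volumes[shown]
                inversions.append((shown, hidden, factor))
    return inversions


# Relative volumes from this post, with "global warming is bad" as reference.
volumes = {
    "global warming is bad": 1.0,
    "global warming is good": 1.0 / 3,
    "global warming is not caused by humans": 1.0 / 5,
}

# Assumption for this example: only the completion named in the text.
suggested = ["global warming is good"]

for shown, hidden, factor in find_inversions(suggested, volumes):
    print(f"{shown!r} was suggested, but {hidden!r} is searched about {factor:.0f}x more")
```

Run daily over many prefixes, a check like this would flag every suggestion that is displayed ahead of a more searched alternative.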

Google Autocomplete Disasters are Not New

Many have already happened. For instance, Anna Jobin has been talking about the autocompletion of sexist stereotypes since 2013 in this post, and pointed out the problem of the lack of responsibility.

More recently, the Netflix movie The Social Dilemma started by showing autocomplete results about global warming. It seemed that Google was giving people what they wanted to hear based on their location, which seems creepy enough. The reality is even worse.

In this post, I want to show that autocomplete is not only amplifying stereotypes, but actually promoting disinformation that is searched less than actual information.

Google’s Autocomplete is not designed to be a “Mirror of Society”

Google autocomplete serves the commercial interests of Google, Inc. As with YouTube’s recommendations and Facebook’s group recommendations, it doesn’t try to give users what they really want or care about. It tries to maximize a set of metrics that increase Google’s profit or its market share. Google chooses how to configure its AI.

These AI Choices Have a Massive Impact

Google serves 3.5 billion queries every day. There are around 10 autocomplete results per query. So tens of billions of autocomplete results are seen every day. They impact society; we just don’t know in which direction.

Transparency Works

Anna Jobin managed to change sexist Google autocompletes by talking about them.

When I talked about YouTube promoting harmful false information in this post in 2017, I received very little reaction. But after a few years of media attention in the Guardian, the Wall Street Journal, the New York Times and others, Google changed its algorithms to tackle these issues. According to Google, these changes resulted in a reduction of more than 70% in the promotion of harmful content.

Are those just a few bugs, or is the autocomplete algorithm pushing society in a particular direction? We need to monitor Google Autocomplete suggestions at scale, and that’s exactly what we started to do on AlgoTransparency.org.

Guillaume Chaslot, PhD in Computer Science

Ex-Google engineer